Kubernetes Blog The Kubernetes blog is used by the project to communicate new features, community reports, and any news that might be relevant to the Kubernetes community.

-

From Kubernetes Dashboard to Headlamp: Understanding the Transition

on June 1, 2026 at 6:00 pm

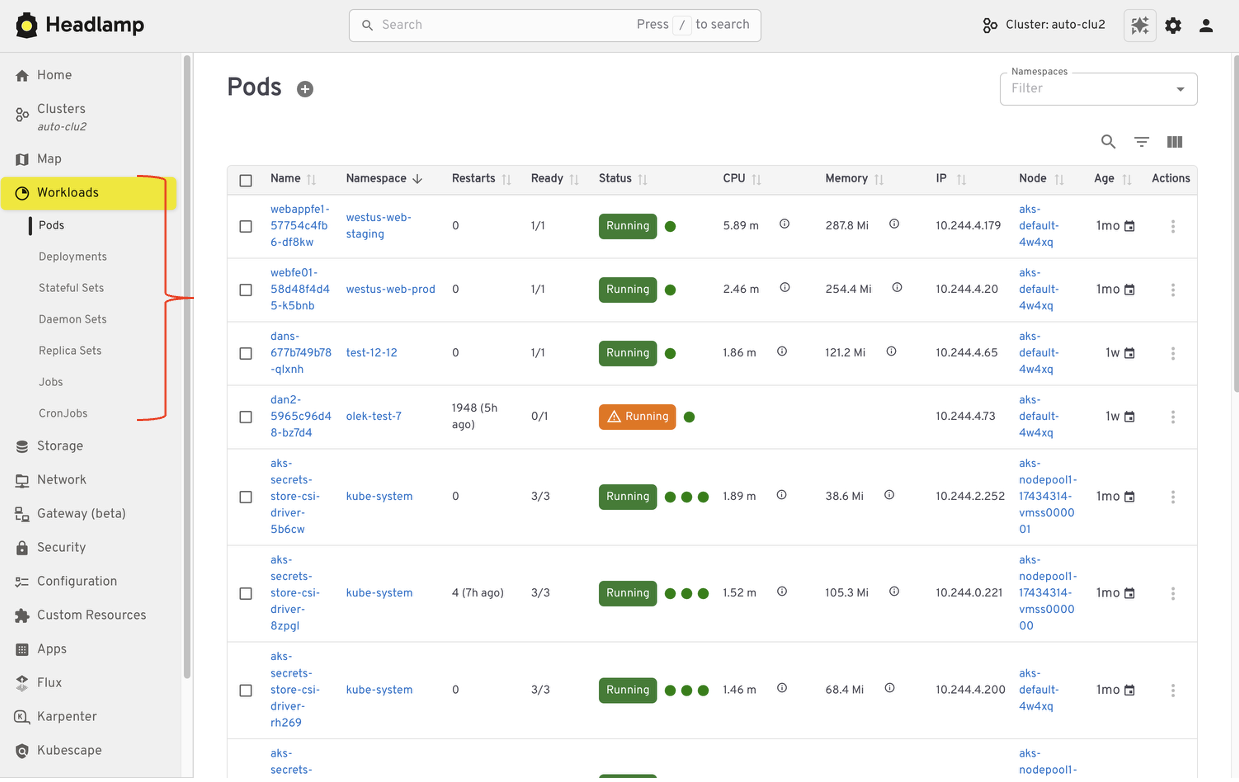

For many people, Kubernetes Dashboard was their first window into Kubernetes. It offered a simple visual way to see what was running in a cluster, inspect resources, and build confidence without relying on the command line. For years, it helped developers, students, and operators make sense of Kubernetes, and it served as an important onramp into the ecosystem.

The Kubernetes Dashboard project has now been archived. We deeply respect the work the team did and the role Dashboard played in making Kubernetes more approachable for so many users.

Headlamp builds on that foundation and carries it forward. It keeps the clarity of a visual interface while adding capabilities that match how Kubernetes is used today. This includes multi-cluster visibility, application-centric views, extensibility through plugins, and flexible deployment options that work both in-cluster and on the desktop.

This guide is meant to help you navigate that transition with confidence. Before diving into the mechanics of migration, we start with familiar ground by looking at how common Kubernetes Dashboard workflows map to Headlamp. We also cover what stays the same and what improves after the switch. The goal is not just to replace a tool, but to honor a user-centered legacy and help you land in a UI that can grow with you as your Kubernetes usage evolves.

Mapping Kubernetes Dashboard workflows to Headlamp

If you have used Kubernetes Dashboard before, many workflows in Headlamp will feel familiar. Headlamp does not introduce a new way of thinking. Instead, it builds on workflows users already know and extends them in practical ways. The focus is continuity. What worked before still works, with more room to grow.

Viewing workloads and resources

In Kubernetes Dashboard, most users started by browsing resources such as pods, deployments, services, and namespaces. Headlamp keeps this same starting point. Workloads are easy to find and inspect, and moving between namespaces and clusters is simpler.
Resources are still organized in familiar ways, and navigation feels smoother, especially when you work across multiple environments.

Editing and interacting with resources

Like Kubernetes Dashboard, Headlamp lets you view and edit manifests directly in the UI based on your permissions. You can delete resources, scale workloads, or update configurations from the interface. All actions follow standard Kubernetes RBAC. If you could perform an action in Dashboard, you will find the same capability in Headlamp, with the same respect for access controls.

Understanding relationships

Where Headlamp begins to expand the experience is in how it presents relationships between resources. In addition to list views, Headlamp offers visual ways to see how workloads, services, and configurations connect. This helps provide context without changing the underlying workflows users already rely on.

At a high level, the tasks you performed in Kubernetes Dashboard are still there. Headlamp keeps familiar workflows while making it easier to scale as clusters, teams, and applications grow.

Where Headlamp goes beyond Kubernetes Dashboard

Expanding from single cluster to multi-cluster workflows

Kubernetes Dashboard was designed to work with one cluster at a time. That model worked well for simple setups, but it became limiting as teams adopted multiple environments.

Headlamp expands this view by letting you work with multiple clusters from a single interface without switching tools or losing context. This makes it easier to manage development, staging, and production environments side by side. For teams running Kubernetes in more than one place, this shift reduces friction. You can stay oriented and move between clusters with confidence.

From resource lists to application context with Projects

Projects give you an application-centered way to view Kubernetes. Instead of jumping between lists, you can group related workloads, services, and supporting resources in one place.
This makes applications easier to understand. You can see what belongs together, track changes in context, and troubleshoot without scanning the cluster piece by piece.

Projects are built on native Kubernetes concepts. Namespaces, labels, and RBAC continue to work the same way they always have. Headlamp adds a visual layer that brings related resources together.

Projects are optional. You can still work at the individual resource level when that fits your task. When you need more context, Projects help you step back and see the bigger picture.

Extend the Headlamp UI with plugins

Headlamp can be extended through plugins that bring common workflows directly into the UI. Instead of switching tools, you work in one place with the same context.

For example, the Flux plugin brings GitOps workflows into Headlamp. It allows teams to view application state alongside the Kubernetes resources that Flux manages, making it easier to understand how changes in Git relate to what is running in the cluster.

The AI Assistant follows a similar pattern. It adds a conversational layer to the UI that helps users understand what they are seeing, troubleshoot issues, or take action. All of this happens in the same screen where the problem appears.

Building your own plugins

Plugins are optional and not limited to community-built extensions. Platform and project teams can also create their own plugins. This allows organizations to add custom integrations that match their specific workflows and internal tooling, while keeping the user experience consistent.

Choosing how and where Headlamp runs

Headlamp gives teams flexibility in how they use a Kubernetes UI. You can run it directly in a cluster, use it as a desktop application, or combine both approaches based on your needs.

Running Headlamp in-cluster works well for shared environments.
It provides a centrally managed UI with controlled access and fits naturally into Kubernetes setups, following the same authentication and RBAC rules as other in-cluster components.

The desktop application is often a better fit for local development and onboarding. It also works well when you need to manage multiple clusters from one place. Users can connect using their existing kubeconfig without deploying anything into the cluster.

These options are not mutually exclusive. Many teams use the desktop app for day-to-day work, while relying on an in-cluster deployment for shared or production environments.

Preparing for the Migration

Before moving from Kubernetes Dashboard to Headlamp, it helps to take stock of how you use the Dashboard today. A little reflection up front goes a long way toward making the transition feel smooth and familiar.

Start by noting which clusters and namespaces you access and how authentication works. Headlamp relies on standard Kubernetes authentication and RBAC. In most cases, existing access models carry over without change. If users already connect using kubeconfig files or service accounts, they will be able to access the same resources in Headlamp.

It is also useful to think about the workflows that matter most to your team. Some users rely on Dashboard for quick inspection or troubleshooting, while others use it for lightweight edits or validation. Headlamp supports these same workflows and adds optional capabilities on top. Knowing what you rely on today makes the transition more predictable.

If you would like to explore Headlamp or try it out before migrating, you can learn more at headlamp.dev. This post focused on understanding the transition and what to expect. A step-by-step migration guide is coming soon and will walk through installation and migration in detail.

-

Reconciling the Past: Correcting Records for Unfixed Kubernetes CVEs

on May 26, 2026 at 5:30 pm

The Kubernetes project relies on transparency to empower cluster administrators and security researchers. One important way we do that is by publishing CVE records into the Common Vulnerabilities and Exposures database. As part of our ongoing effort to mature the official Kubernetes CVE Feed, we have identified some discrepancies. CVE records for a few older, unfixed issues incorrectly include a fixed version field.

The Kubernetes Security Response Committee (SRC) will correct the affected CVE records on June 1, 2026. This may result in vulnerability scanners identifying these vulnerabilities in places where they were previously not detected.

To help reduce confusion, this post provides a technical update on three vulnerabilities that were disclosed in previous years but remain unfixed: CVE-2020-8561, CVE-2020-8562, and CVE-2021-25740.

Why we are updating these records now

While these vulnerabilities have been public for several years, the recent work to generate official Open Source Vulnerabilities (OSV) files revealed that their corresponding CVE records did not accurately reflect their status. Specifically, some records suggested a fixed version existed, when in reality, these issues are architectural design trade-offs that cannot be fully remediated through code without breaking fundamental Kubernetes functionality.

Correcting these records matters to the community for two reasons:

- Automation Fidelity: Modern vulnerability scanners depend on precise version ranges. Inaccurate fixed tags lead to false negatives, giving users a false sense of security.
- Risk Documentation: By formalizing these as unfixed, we ensure that platform providers and administrators are aware of the persistent need for administrative mitigations.

For completeness, we should also mention that CVE-2020-8554 is an unfixed CVE with a correct CVE record stating that it affects all versions. That record will also be updated to use a more standardized version number format.

Technical analysis of unfixed architectural risks

The following vulnerabilities will not be fixed by the Kubernetes project. GitHub issues remain the best reference for the technical mechanics of these flaws.

CVE-2020-8561: Webhook redirect in kube-apiserver

- Severity: Medium (4.1).
- The Issue: The kube-apiserver follows HTTP redirects when communicating with admission webhooks. An actor capable of configuring an admission webhook (a ValidatingWebhookConfiguration or MutatingWebhookConfiguration) can redirect API server requests to internal, private networks.
- Why it remains unfixed: Restricting this behavior would require breaking the standard HTTP client behavior that many legitimate integrations rely on.
- Mitigation: Set the API server log level to less than 10 (to prevent logging response bodies) and disable dynamic profiling (--profiling=false) to prevent unauthorized log-level changes.

CVE-2020-8562: Proxy bypass via DNS TOCTOU

- Severity: Low (3.1).
- The Issue: A Time-of-Check to Time-of-Use (TOCTOU) race condition in the API server proxy allows users to bypass IP restrictions. The system performs a DNS check to validate an IP, but then performs a second resolution for the actual connection, which an attacker can manipulate.
- Why it remains unfixed: Fixing this requires pinning resolved IPs in a way that breaks complex split-horizon DNS or dynamic IP environments.
- Mitigation: Use a local DNS caching server like dnsmasq for the API server and configure min-cache-ttl to enforce consistent responses between the check and the connection.

CVE-2021-25740: Cross-namespace forwarding via Endpoints

- Severity: Low (3.1).
- The Issue: A design flaw in the Endpoints and EndpointSlice API objects allows users to manually specify IP addresses, which can be used to point a LoadBalancer or Ingress toward backends in other namespaces.
- Why it remains unfixed: This is a fundamental feature of the Endpoints API used by many networking tools and operators.
- Mitigation: Restrict write access to Endpoints (legacy) and EndpointSlices.
Since Kubernetes 1.22, Kubernetes RBAC authorization mode no longer includes those permissions in the default edit and admin ClusterRoles. That removal applies to clusters created using Kubernetes v1.22 or later; for clusters upgraded from older versions, administrators should manually audit and reconcile the system:aggregate-to-edit ClusterRole.

Note: On June 1, 2026, these CVE records will be updated to correctly reflect the fact that all versions are affected. You may see them begin to appear in vulnerability scanner results.

Required actions for administrators

The Kubernetes project recommends a secure-by-configuration approach to manage these persistent risks:

| Vulnerability | Action item | Severity score (Rating) | Command / configuration |
| --- | --- | --- | --- |
| CVE-2020-8561 | Restrict Log Verbosity | 4.1 (Medium) | Ensure --v is set to < 10 and --profiling=false. |
| CVE-2020-8562 | Enforce DNS Consistency | 3.1 (Low) | Deploy dnsmasq or a similar caching resolver on control plane nodes. |
| CVE-2021-25740 | Hardened RBAC | 3.1 (Low) | kubectl auth reconcile to remove Endpoints write access from broad roles. |

The RBAC action for CVE-2021-25740 applies when your cluster uses RBAC authorization mode, which is the default for clusters created with standard Kubernetes tooling.

Administrators should independently test and validate these configurations in a non-production environment, assessing the architectural risks against their specific threat model and risk tolerance.

Conclusion: maturity through transparency

The effort to reconcile these records is a sign of a maturing security ecosystem. By moving away from the "patch-only" mindset and accurately documenting architectural debt, the Kubernetes project provides the community with the high-fidelity data needed to secure modern cloud native infrastructure.

We would like to thank the security researchers (QiQi Xu, Javier Provecho, and others) who identified these risks, and the SIG Security Tooling contributors who continue to refine our official feeds.
Special shoutout to Rory McCune for sharing information around these CVEs through his blog posts.

Update 2026/06/01: Today, the Kubernetes SRC has updated the CVE records for CVE-2020-8554, CVE-2020-8561, CVE-2020-8562, and CVE-2021-25740.
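
As a rough aid for the RBAC audit recommended for CVE-2021-25740, the sketch below scans ClusterRole rules for write access to Endpoints or EndpointSlices. It assumes the rules have already been exported as plain dictionaries shaped like the `rules` field of a ClusterRole; the function name and the sample data are hypothetical, and the script only reports findings, so it does not replace `kubectl auth reconcile`.

```python
# Verbs that modify objects; "*" grants every verb.
WRITE_VERBS = {"create", "update", "patch", "delete", "deletecollection", "*"}

# (apiGroup, resource) pairs relevant to CVE-2021-25740.
# Endpoints live in the core group (""), EndpointSlices in discovery.k8s.io.
TARGETS = (("", "endpoints"), ("discovery.k8s.io", "endpointslices"))


def find_endpoint_write_rules(rules):
    """Return (rule_index, resource, write_verbs) for each risky rule."""
    findings = []
    for index, rule in enumerate(rules):
        groups = rule.get("apiGroups", [])
        resources = rule.get("resources", [])
        verbs = set(rule.get("verbs", [])) & WRITE_VERBS
        if not verbs:
            continue  # read-only rule, nothing to report
        for group, resource in TARGETS:
            group_match = group in groups or "*" in groups
            resource_match = resource in resources or "*" in resources
            if group_match and resource_match:
                findings.append((index, resource, sorted(verbs)))
    return findings


# Hypothetical rules, shaped like the "rules" list of a ClusterRole.
sample_rules = [
    {"apiGroups": [""], "resources": ["pods", "endpoints"],
     "verbs": ["get", "list", "create", "patch"]},
    {"apiGroups": ["discovery.k8s.io"], "resources": ["endpointslices"],
     "verbs": ["get", "list", "watch"]},
    {"apiGroups": ["apps"], "resources": ["deployments"], "verbs": ["*"]},
]

for index, resource, verbs in find_endpoint_write_rules(sample_rules):
    print(f"rule {index}: write access to {resource} via {verbs}")
```

Only the first sample rule is reported, because it combines the core API group, the endpoints resource, and write verbs. Wildcard groups, resources, and verbs are treated as matches so that broad roles are not missed.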

-

Announcing etcd 3.7.0-beta.0

on May 20, 2026 at 12:00 am

SIG-Etcd announces the availability of the first beta release of etcd v3.7.0. This new version of the popular distributed database and key Kubernetes component includes the long-requested RangeStream feature, as well as a refactoring and cleanup of multiple legacy components and interfaces. v3.7 will deliver improved security, better operational reliability, and an improved experience for working with large result sets.

First, however, the project needs users to test the beta. You can find v3.7.0-beta.0 here:

- Source code
- Binaries
- Official container images

Please try it out and report issues in the etcd repo. This beta also determines the EOL of version 3.4.

RangeStream

In etcd v3.6 and earlier, it is challenging to work with requests that return large result sets. The client or requesting application is forced to wait for the full result set, leading to unpredictable latency and memory usage. The RangeStream RPC lets calling applications accept result sets in chunks, reducing latency and making buffering memory usage more predictable.

Much of the work on RangeStream was done by a relatively new contributor to etcd, Jeffrey Ying, a software engineer at Google. New contributors can have a substantial impact on etcd development.

"I've always been fascinated by database internals, and building RangeStream was a great opportunity to solve a bottleneck we were hitting in production with Kubernetes. It was the perfect opportunity to collaborate across projects and improve the ecosystem as a whole. Jumping into etcd as a new contributor had a bit of a learning curve, but the community is incredibly welcoming. The leads were very receptive to my ideas and helped me iterate quickly, while maintaining the project's high bar for reliability and code quality," said Jeffrey.

Instructions on how to use RangeStream in gRPC calls and in etcdctl can be found in the etcd documentation. Users should try it out for their own applications.
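
To illustrate the difference between waiting for a full result set and consuming it in chunks, here is a small sketch. It is not the etcd client API: `range_all`, `range_stream`, and the in-memory `store` are hypothetical stand-ins chosen for illustration, and the real RPC and etcdctl usage are described in the etcd documentation.

```python
from itertools import islice


def range_all(store, prefix):
    """Return every matching key-value pair at once; the caller waits for all of them."""
    return [(k, v) for k, v in sorted(store.items()) if k.startswith(prefix)]


def range_stream(store, prefix, chunk_size):
    """Yield matching key-value pairs in chunks of at most chunk_size items."""
    matches = ((k, v) for k, v in sorted(store.items()) if k.startswith(prefix))
    while True:
        chunk = list(islice(matches, chunk_size))
        if not chunk:
            return
        yield chunk


# Hypothetical in-memory data standing in for a key-value store.
store = {f"/registry/pods/pod-{i:03d}": f"spec-{i}" for i in range(10)}
store["/registry/nodes/node-a"] = "node-spec"

for chunk in range_stream(store, "/registry/pods/", chunk_size=4):
    # The toy store is already in memory; the point is that the caller
    # handles at most chunk_size items at a time instead of the whole result.
    print(len(chunk), chunk[0][0])
```

With chunked delivery the caller can start processing after the first chunk arrives, and its buffer is bounded by the chunk size rather than by the size of the full result.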

Removal of v2store

The last vestiges of etcd v2store have been removed in v3.7, making this the first release that is 100% on v3store. This includes discovery, bootstrap, v2 requests, and the v2 client. Our team has also removed multiple deprecated experimental flags.

All of these changes may create some breakage for users, particularly those who have not already updated to v3.6.11. We are interested in hearing about blockers encountered by users and dependent applications; please report anything you find that can't be remedied or needs better upgrade documentation.

etcd v3.7.0-beta.0 also includes bbolt v1.5.0 and raft v3.7.0.

3.4 EOL

According to our community support policy, we typically maintain only the latest two minor versions, currently v3.6 and v3.5. Etcd v3.5 will be supported for 1 year after the v3.7.0 final release.

As mentioned under "extended support for v3.4" in the etcd v3.6.0 release announcement, etcd v3.4 has been EOL since May 15, 2026. SIG-etcd may release one more security patch for that version at the end of May, if warranted by patched vulnerabilities. In any case, it will cease being updated after the end of May. Users on v3.4 should be planning to upgrade their clusters.

Feedback and Future Betas

Reach the etcd contributors with your feedback about v3.7.0-beta.0 in any of the following places:

- GitHub issues
- #SIG-etcd Slack channel in Kubernetes Slack
- etcd-dev mailing list

SIG-etcd may release additional betas of version v3.7.0 with additional refactoring, particularly of our use of protobuf libraries. Release candidates and the final release will probably happen through June, possibly into early July.

-

Kubernetes v1.36: New Metric for Route Sync in the Cloud Controller Manager

on May 15, 2026 at 6:35 pm

This article was originally published with the wrong date. It was later republished, dated the 15th of May 2026.

Kubernetes v1.36 introduces a new alpha counter metric route_controller_route_sync_total to the Cloud Controller Manager (CCM) route controller implementation at k8s.io/cloud-provider. This metric increments each time routes are synced with the cloud provider.

A/B testing watch-based route reconciliation

This metric was added to help operators validate the CloudControllerManagerWatchBasedRoutesReconciliation feature gate introduced in Kubernetes v1.35. That feature gate switches the route controller from a fixed-interval loop to a watch-based approach that only reconciles when nodes actually change. This reduces unnecessary API calls to the infrastructure provider, lowering pressure on rate-limited APIs and allowing operators to make more efficient use of their available quota.

To A/B test this, compare route_controller_route_sync_total with the feature gate disabled (default) versus enabled. In clusters where node changes are infrequent, you should see a significant drop in the sync rate with the feature gate turned on.

Example: expected behavior

With the feature gate disabled (the default fixed-interval loop), the counter increments steadily regardless of whether any node changes occurred:

    # After 10 minutes with no node changes
    route_controller_route_sync_total 60
    # After 20 minutes, still no node changes
    route_controller_route_sync_total 120

With the feature gate enabled (watch-based reconciliation), the counter only increments when nodes are actually added, removed, or updated:

    # After 10 minutes with no node changes
    route_controller_route_sync_total 1
    # After 20 minutes, still no node changes, counter unchanged
    route_controller_route_sync_total 1
    # A new node joins the cluster, counter increments
    route_controller_route_sync_total 2

The difference is especially visible in stable clusters where nodes rarely change.
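
One way to quantify the comparison is to turn two counter samples into a syncs-per-minute rate. The sketch below is a minimal illustration that reuses the sample values from the example; the helper names are hypothetical, it assumes unlabeled `name value` sample lines, and in practice you would more likely compute the rate in your monitoring system.

```python
METRIC = "route_controller_route_sync_total"


def parse_counter(line, name=METRIC):
    """Extract the numeric value from a single 'metric_name value' sample line."""
    metric, value = line.split()
    if metric != name:
        raise ValueError(f"unexpected metric: {metric}")
    return float(value)


def syncs_per_minute(first_sample, second_sample, minutes_between):
    """Average number of route syncs per minute between two counter samples."""
    delta = parse_counter(second_sample) - parse_counter(first_sample)
    return delta / minutes_between


# Samples taken 10 minutes apart, with no node changes in between.
disabled = syncs_per_minute(f"{METRIC} 60", f"{METRIC} 120", 10)
enabled = syncs_per_minute(f"{METRIC} 1", f"{METRIC} 1", 10)

print(f"gate disabled: {disabled:.1f} syncs/min")
print(f"gate enabled:  {enabled:.1f} syncs/min")
```

A steady non-zero rate with the gate disabled and a rate near zero with it enabled is the pattern the example describes for a stable cluster.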

Where can I give feedback?

If you have feedback, feel free to reach out through any of the following channels:

- The #sig-cloud-provider channel on Kubernetes Slack
- The KEP-5237 issue on GitHub
- The SIG Cloud Provider community page for other communication channels

How can I learn more?

For more details, refer to KEP-5237.

-

Kubernetes v1.36: Mixed Version Proxy Graduates to Beta

on May 15, 2026 at 6:00 pm

Back in Kubernetes 1.28, we introduced the Mixed Version Proxy (MVP) as an Alpha feature (under the feature gate UnknownVersionInteroperabilityProxy) in a previous blog post. The goal was simple but critical: make cluster upgrades safer by ensuring that requests for resources not yet known to an older API server are correctly routed to a newer peer API server, instead of returning an incorrect 404 Not Found.

We are excited to announce that the Mixed Version Proxy is moving to Beta in Kubernetes 1.36 and will be enabled by default! The feature has evolved significantly since its initial release, addressing key gaps and modernizing its architecture. Here is a look at how the feature has evolved and what you need to know to leverage it in your clusters.

What problem are we solving?

In a highly available control plane undergoing an upgrade, you often have API servers running different versions. These servers might serve different sets of APIs (Groups, Versions, Resources).

Without MVP, if a client request lands on an API server that does not serve the requested resource (e.g., a new API version introduced in the upgrade), that server returns a 404 Not Found. This is technically incorrect because the resource is available in the cluster, just not on that specific server. This can lead to serious side effects, such as mistaken garbage collection or blocked namespace deletions.

MVP solves this by proxying the request to a peer API server that can serve it.

    sequenceDiagram
        participant Client
        participant API_Server_A as API Server A (Older/Different)
        participant API_Server_B as API Server B (Newer/Capable)
        Client->>API_Server_A: 1. Request for Resource (e.g., v2)
        Note over API_Server_A: Determines it cannot serve locally
        API_Server_A->>API_Server_A: 2. Looks up capable peer in Discovery Cache
        API_Server_A->>API_Server_B: 3. Proxies request (adds x-kubernetes-peer-proxied header)
        API_Server_B->>API_Server_B: 4. Processes request locally
        API_Server_B-->>API_Server_A: 5. Returns Response
        API_Server_A-->>Client: 6. Forwards Response

How has it evolved since 1.28

The initial Alpha implementation was a great proof of concept, but it had some limitations and relied on older mechanisms. Here is how we have modernized it for Beta:

From StorageVersion API to Aggregated Discovery

In the Alpha version, API servers relied on the StorageVersion API to figure out which peers served which resources. While functional, this approach had a significant limitation: the StorageVersion API is not yet supported for CRDs and aggregated APIs.

For Beta, we have replaced the reliance on StorageVersion API calls with the use of Aggregated Discovery. API servers now use the aggregated discovery data to dynamically understand the capabilities of their peers.

The Missing Piece: Peer-Aggregated Discovery

The 1.28 blog post noted a significant gap: while we could proxy resource requests, discovery requests still only showed what the local API server knew about.

In 1.36, we have added Peer-Aggregated Discovery support! Now, when a client performs discovery (e.g., listing available APIs), the API server merges its local view with the discovery data from all active peers. This provides clients with a complete, unified view of all APIs available across the entire cluster, regardless of which API server they connected to.

    sequenceDiagram
        participant Client
        participant API_Server_A as API Server A
        participant API_Server_B as API Server B
        Client->>API_Server_A: 1. Request Discovery Document
        API_Server_A->>API_Server_A: 2. Gets Local APIs
        API_Server_A->>API_Server_B: 3. Gets Peer APIs (Cached or Direct)
        API_Server_A->>API_Server_A: 4. Merges and sorts lists deterministically
        API_Server_A-->>Client: 5. Returns Unified Discovery Document

Peer-aggregated discovery will be the default behavior. Note that it is enabled only if the --peer-ca-file flag is set; otherwise the server will fall back to showing only its local APIs. There may still be cases where you need to inspect only the resources served by the specific API server you are connected to. You can request this non-aggregated view by including the profile=nopeer parameter in your request's Accept header (e.g., Accept: application/json;g=apidiscovery.k8s.io;v=v2;as=APIGroupDiscoveryList;profile=nopeer).

Required configuration

While the feature gate will be enabled by default, it requires certain flags to be set to allow for secure communication between peer API servers. To function correctly, make sure your API server is configured with the following flags:

- --feature-gates=UnknownVersionInteroperabilityProxy=true: This will be the default in 1.36, but it is good to verify.
- --peer-ca-file=<path-to-ca>: [CRITICAL] This is a required flag. You must provide the CA bundle that the source API server will use to authenticate the serving certificates of destination peer API servers. Without this, proxying will fail due to TLS verification errors.
- --peer-advertise-ip and --peer-advertise-port: These flags are used to set the network address that peers should use to reach this API server. If unset, the values from --advertise-address or --bind-address are used. If you have complex network topologies where API servers communicate over a specific internal interface, setting these flags explicitly is highly recommended.
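
As a quick sanity check before an upgrade, the flags described above can be verified against an API server's command line. The sketch below assumes the arguments are available as a list of `--flag=value` strings (for example, copied from a static Pod manifest); the function name and the sample values are hypothetical.

```python
def check_peer_proxy_flags(args):
    """Return a list of human-readable warnings about Mixed Version Proxy flags."""
    flags = {}
    for arg in args:
        if arg.startswith("--"):
            # Split on the first "=" only, so values that contain "=" stay intact.
            name, _, value = arg[2:].partition("=")
            flags[name] = value

    warnings = []
    if not flags.get("peer-ca-file"):
        warnings.append(
            "--peer-ca-file is not set; peer proxying will fail TLS verification"
        )
    gates = flags.get("feature-gates", "").split(",")
    if "UnknownVersionInteroperabilityProxy=false" in gates:
        warnings.append("UnknownVersionInteroperabilityProxy is explicitly disabled")
    if "peer-advertise-ip" not in flags:
        warnings.append(
            "--peer-advertise-ip is unset; --advertise-address or --bind-address will be used"
        )
    return warnings


# Hypothetical kube-apiserver arguments.
sample_args = [
    "--advertise-address=10.0.0.10",
    "--feature-gates=UnknownVersionInteroperabilityProxy=true",
    "--peer-ca-file=/etc/kubernetes/pki/ca.crt",
]

for warning in check_peer_proxy_flags(sample_args):
    print("warning:", warning)
```

The last warning is informational rather than an error, since falling back to the advertise or bind address is the documented behavior when the peer-advertise flags are unset.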

Configuring with kubeadm

If you manage your cluster with kubeadm, you can configure these flags in your ClusterConfiguration file. In the v1beta4 configuration API, extraArgs is a list of name/value pairs:

    apiVersion: kubeadm.k8s.io/v1beta4
    kind: ClusterConfiguration
    apiServer:
      extraArgs:
        - name: peer-ca-file
          value: "/etc/kubernetes/pki/ca.crt"
        # add peer-advertise-ip and peer-advertise-port entries if needed

Call to action

If you are running clusters with multiple control plane nodes and upgrading them regularly, the Mixed Version Proxy is a major safety improvement. With it becoming default in 1.36, we encourage you to:

- Review your API server flags to ensure --peer-ca-file is set properly.
- Test the feature in your staging environments as you prepare for the 1.36 upgrade.
- Provide feedback to SIG API Machinery (Slack, mailing list, or by attending SIG API Machinery meetings) on your experience.
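
For the non-aggregated discovery view described earlier, the request differs only in its Accept header. The sketch below builds (but does not send) such a request with Python's standard library; the server URL is a placeholder, the `/apis` path is assumed to be the discovery endpoint being queried, and authentication is omitted.

```python
from urllib.request import Request

# Accept value for aggregated discovery, as quoted in the article.
DISCOVERY_ACCEPT = (
    "application/json;g=apidiscovery.k8s.io;v=v2;as=APIGroupDiscoveryList"
)


def discovery_request(server, local_only=False):
    """Build a discovery request; local_only adds profile=nopeer to the Accept header."""
    accept = DISCOVERY_ACCEPT + (";profile=nopeer" if local_only else "")
    return Request(f"{server}/apis", headers={"Accept": accept})


# Placeholder server address; nothing is sent over the network here.
req = discovery_request("https://kube-apiserver.example:6443", local_only=True)
print(req.full_url)
print(req.get_header("Accept"))
```

Comparing the responses with and without `profile=nopeer` during an upgrade is one way to see which APIs come from the local server and which are contributed by peers.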